
Java webscraper

3/31/2023

A web scraper helps gather and format data to collect information. A dynamic page, in other words, gives users the data and the logic, but they have to put them together to see the whole, rendered web page. An example of such a page would be as simple as a script that holds content: "Available 2024 on scrapfly.io, maybe." and writes it into the document.

If Java is not installed and running on your computer, the website won't work. If a real headless browser able to manage every recent web feature existed, it would mean a team would have ...

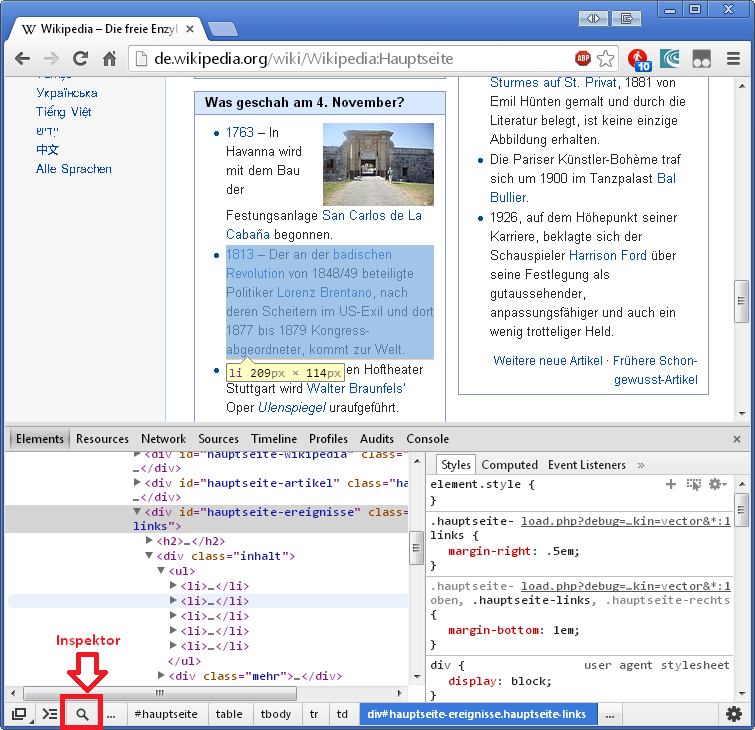

JavaScript has become one of the most popular and widely used languages, thanks to the massive improvements it has seen and the introduction of the Node.js runtime. A web scraper is a program that quite literally scrapes, or gathers, data off websites.

For Node.js there is the Kindle book Scraping Javascript-dependent website with Puppeteer by Igor Savinkin, which at only 55 pages gives just a quick overview of web scraping with Puppeteer. Anything published in 2013, however, is very outdated now, because web scraping and Java have moved on a lot in the last 10 years.

Kevin Sahin 02 August 2022 (updated) 23 min read.

Either you contribute to HtmlUnit to produce a version of HtmlUnit that does not use the dependencies missing from Android, or you can use an alternative method like this one, as this seems to be the path someone else went down before you.

What are the existing tools and how do you use them? And what are some common challenges, tips and shortcuts when it comes to scraping using web browsers?

One of the most commonly encountered web scraping issues is: why can't my scraper see the data I see in the web browser? On the left we see what the browser sees; on the right is our HTTP web scraper. Where did everything go? Dynamic pages use complex JavaScript-powered web technologies that offload processing to the client.
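To make the "where did everything go?" question concrete, here is a minimal sketch in plain Java of what an HTTP-only scraper is left with on a dynamic page. The page markup, the class name StaticViewSketch and the method visibleText are all made up for illustration, and the regex-based tag stripping is a simplification, not a real HTML parser.

```java
final class StaticViewSketch {

    // Approximates what a plain HTTP scraper can read: script blocks and tags
    // are dropped, and nothing is executed. (Hypothetical helper, regex-based.)
    static String visibleText(String html) {
        return html
                .replaceAll("(?is)<script\\b.*?</script>", " ") // scripts never run here
                .replaceAll("<[^>]+>", " ")                     // strip remaining tags
                .replaceAll("\\s+", " ")                        // collapse whitespace
                .trim();
    }

    public static void main(String[] args) {
        // Hypothetical dynamic page: the container is empty until the script runs.
        String html = "<html><body><h1>Products</h1><div id=\"content\"></div>"
                + "<script>document.getElementById('content').textContent"
                + " = 'Loaded by script';</script></body></html>";

        // Prints "Products": the text set by the script is not in the static markup.
        System.out.println(visibleText(html));
    }
}
```

A browser would run the script and show "Loaded by script" inside the container, which is the gap a headless browser is meant to close.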

Some advanced options also include the POST and the PUT methods.
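As a rough illustration of these request methods, the sketch below builds a GET and a POST request with the java.net.http client that ships with Java 11 and later. The class name RequestSketch, the user-agent value and the example.com URLs are placeholders, not something the original text specifies.

```java
import java.io.IOException;
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;

final class RequestSketch {

    // Placeholder user agent for illustration only.
    static final String USER_AGENT = "example-scraper/0.1";

    // GET: retrieve a page from the server.
    static HttpRequest get(String url) {
        return HttpRequest.newBuilder(URI.create(url))
                .header("User-Agent", USER_AGENT)
                .GET()
                .build();
    }

    // POST: send form data, for example to a search form.
    static HttpRequest post(String url, String formBody) {
        return HttpRequest.newBuilder(URI.create(url))
                .header("User-Agent", USER_AGENT)
                .header("Content-Type", "application/x-www-form-urlencoded")
                .POST(HttpRequest.BodyPublishers.ofString(formBody))
                .build();
    }

    // Sends the request and returns the response body as text (needs network access).
    static String fetch(HttpRequest request) throws IOException, InterruptedException {
        HttpClient client = HttpClient.newHttpClient();
        return client.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    public static void main(String[] args) {
        HttpRequest request = get("https://example.com/");
        // Only describes the request; call fetch(request) to actually send it.
        System.out.println(request.method() + " " + request.uri());
    }
}
```

A PUT request can be built the same way by swapping POST(...) for PUT(...) on the builder.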

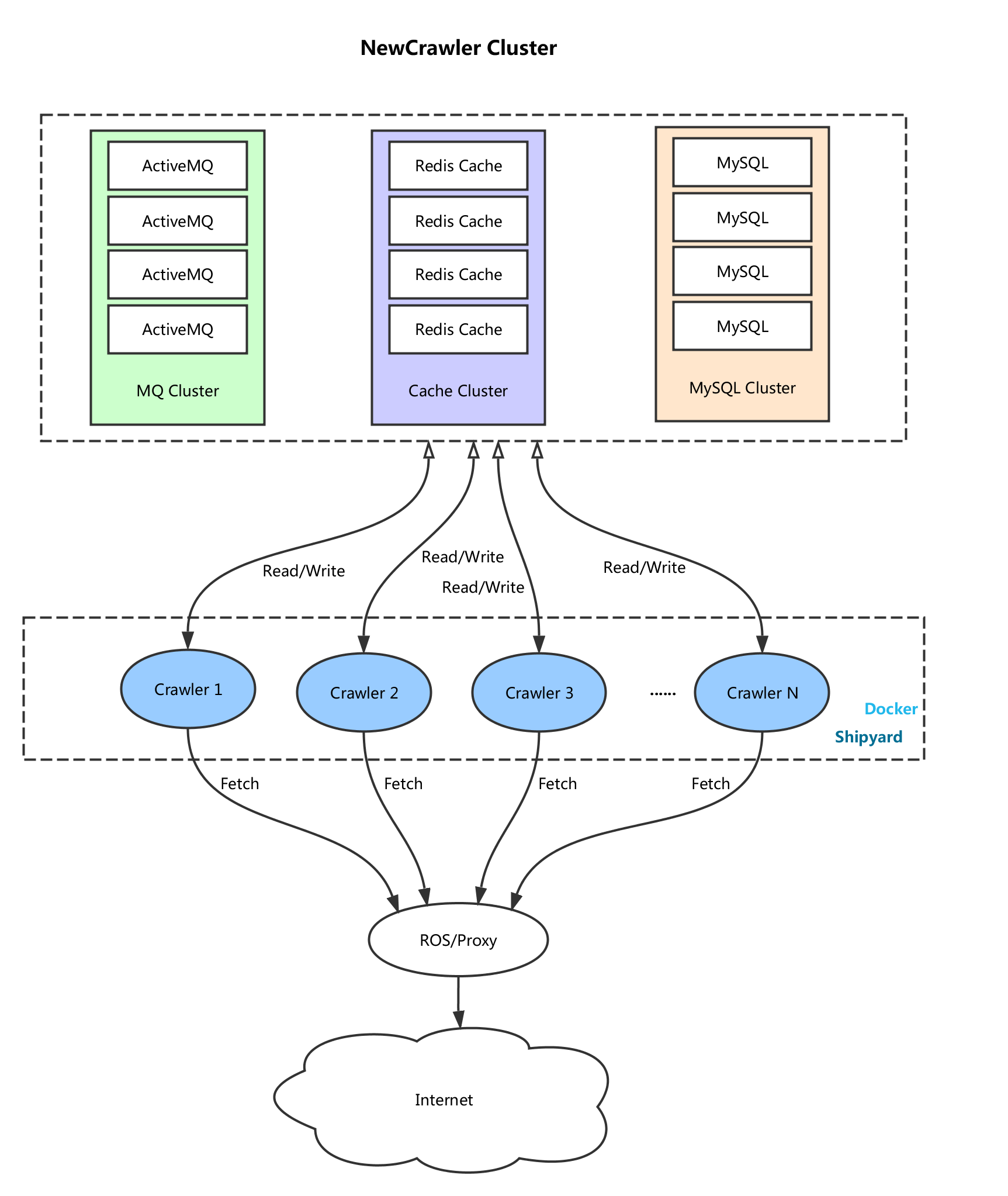

Web scraping (or data extraction) software is used to extract unstructured data from web pages. The data is then converted into a structured format that can be loaded into a database. Web scrapers use the GET method for HTTP requests, meaning that they retrieve data from the server.

Jaunt is a Java library for web scraping, web automation and JSON querying. The library provides a fast, ultra-light browser that is headless (i.e. has no GUI).

Many modern websites in 2023 rely heavily on JavaScript to render interactive data, using frameworks such as React, Angular, Vue.js and so on, which makes web scraping a challenge. In this tutorial, we'll take a look at how we can use headless browsers to scrape data from dynamic web pages.

public static final String USER_AGENT = "Mozilla/5.0 (Macintosh Intel Mac OS X 10_11_4) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/.94 Safari/537.

I want to create a program where I can search for a keyword in Google and save the search results into a CSV file. But when I run it, it terminates every time.

First, Java is a versatile language that can be used on both Windows and Apple platforms. In this post, we will walk you through how to set up a basic web crawler in Java, fetch a site, parse and extract the data, and store everything in a JSON structure.
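The parse, extract and store steps could look roughly like the sketch below. It is an assumption-heavy illustration: it uses a regular expression instead of a real HTML parser (a library such as jsoup or Jaunt would normally take that role), and the class name LinkExtractorSketch, its methods and the sample markup are made up for this example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class LinkExtractorSketch {

    // Simplified pattern for <a ... href="...">text</a>; real pages need a real parser.
    private static final Pattern LINK = Pattern.compile(
            "<a\\s[^>]*href=\"([^\"]*)\"[^>]*>(.*?)</a>",
            Pattern.CASE_INSENSITIVE | Pattern.DOTALL);

    // Extracts {href, text} pairs from already-fetched HTML.
    static List<String[]> extractLinks(String html) {
        List<String[]> links = new ArrayList<>();
        Matcher m = LINK.matcher(html);
        while (m.find()) {
            String text = m.group(2).replaceAll("<[^>]+>", "").trim(); // drop nested tags
            links.add(new String[] {m.group(1), text});
        }
        return links;
    }

    // Minimal escaping (backslashes and quotes only); control characters are not handled.
    static String escape(String s) {
        return s.replace("\\", "\\\\").replace("\"", "\\\"");
    }

    // Stores the extracted pairs as a JSON array of objects.
    static String toJson(List<String[]> links) {
        StringBuilder sb = new StringBuilder("[");
        for (int i = 0; i < links.size(); i++) {
            if (i > 0) sb.append(",");
            sb.append("{\"href\":\"").append(escape(links.get(i)[0]))
              .append("\",\"text\":\"").append(escape(links.get(i)[1])).append("\"}");
        }
        return sb.append("]").toString();
    }

    public static void main(String[] args) {
        // Hypothetical markup standing in for a fetched page.
        String html = "<p><a href=\"/a\">First</a> and "
                + "<a class=\"x\" href=\"/b\"><b>Second</b></a></p>";
        // Prints [{"href":"/a","text":"First"},{"href":"/b","text":"Second"}]
        System.out.println(toJson(extractLinks(html)));
    }
}
```

The same list of pairs could be written out as CSV rows instead of JSON, which is closer to the search-results use case mentioned above.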
